Application Transformation by Clarity

Many government systems rely on software that is more than a decade old, undergoing only minor modifications and patches due to overwhelming fragility and risk avoidance. A combination of operational necessity and the constrained capability of software vendors to iteratively deploy significant technical upgrades often compels leaders to prioritize mission continuity over critical advancements in application performance or maintainability. As a result, the software frequently goes without a major refactor. Failure of mission-critical government software is unacceptable, as it could result in the loss of lives or the inability to complete the mission. In a future conflict with a major military power, it will be essential that these applications are resilient to variable demand, and capable of being maintained and updated on an extremely rapid cadence with confidence to keep pace with the adversary and evolving targets.

This is why application transformation is so important.

Clarity Innovations transforms software so leaders don’t have to choose between fighting today and winning the conflict of tomorrow.

What Application Transformation is & why it’s important

In 2019, the Government Accountability Office (GAO) surveyed 65 federal legacy systems and identified that the 10 most critical ones (ages 8-51 years) cost $337 million a year to maintain. According to a report by KPMG, an auditing firm, 41% of government IT systems are over 10 years old. Kubernetes, the front-running cloud deployment technology, only reached the government mainstream around 2017, which means that many of these applications were not designed to take advantage of modern deployment strategies. As government agencies adopt containerized platforms such as Kubernetes, many expect that the software will automatically inherit the benefits that come with them. In practice, the opposite is often true: legacy software running on a modernized platform typically performs worse than it did on its legacy platform. In order for a modernized platform to be effective, the software running on that platform must first be transformed.

Transforming the codebase of applications to modern languages and frameworks to run on modern platform infrastructure is the goal. This is what will enable the outcomes that organizations are pursuing with a modern tech stack: more performance, more resiliency, less cost. Ensuring the software achieves these outcomes isn’t the only element of transformation that should be considered. Equally important is the transformation of the processes by which applications are developed, tested, secured, and deployed; these processes are often as antiquated as the software itself. During a conflict where our software systems are actively being countered, a 3-, 6-, or 12-month deployment cycle will not be sufficient to meet the demands of the mission. Software must be updated in hours or days to keep pace with the ever-changing battlefield, and achieving this requires significant automation and guaranteed stability.

Software transformation is about enabling the warfighter and making the critical systems they rely on more secure, more resilient, and more performant to stay ahead of our adversaries. Transformation is about improving these systems in a way that does not require extensive downtime, millions of dollars, or years of development time. It’s about executing iteratively, with a lower cost of ownership, and in a matter of weeks or months. Transformation is challenging and it does require a specialized team possessing a unique set of skills.

Transformation Progression

Transforming applications for cloud-like infrastructure is generally broken up into two primary phases: replatforming and modernization.

Replatforming

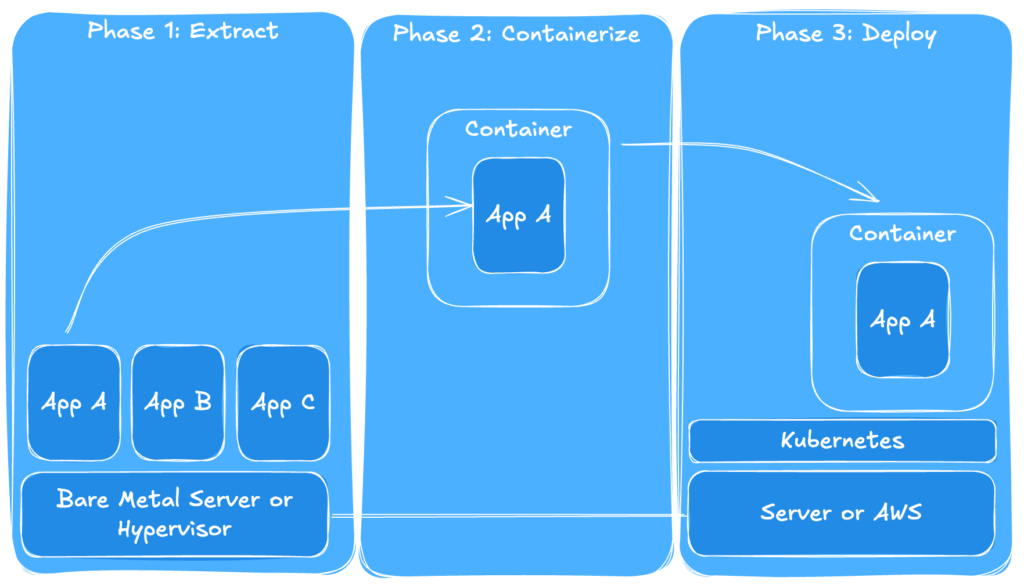

The transformation journey begins with replatforming—the process of moving one or more applications from a legacy deployment model into a cloud-like deployment. We achieve this by building automation for deployment to the cloud environment. This involves utilizing DevSecOps best practices, predominantly through the development of a Continuous Integration/Continuous Deployment (CI/CD) pipeline, that accelerates and streamlines application deployment. Figure 1 shows the process by which a typical replatforming would work. During this process a single legacy component will be extracted, containerized, then moved to the new environment. After successful deployment, the process would repeat for the remaining components.

Figure 1: Replatforming Process Example

One of the primary reasons for initiating this step first is to accelerate development iterations. The faster applications are deployed into a production-like environment and tested, the more rapidly necessary fixes are implemented to ensure smooth operation. This also accelerates iterations for the subsequent modernization step.

Replatforming can take days or weeks, depending on the application’s tech stack and complexity. However, for applications never intended for server-based computing, such as those designed as thick client applications, replatforming may take significantly longer and could even warrant skipping this step entirely, moving directly into modernization. Replatforming will ensure that your application can run in a cloud environment, but it will not be optimized for cloud operations upon completion. Zero-downtime deployments, horizontal scalability, and seamless failover for resilience require a much more in-depth refactor.

Modernization

In the second century, historian Plutarch composed a thought experiment, later named the Ship of Theseus, which posited:

Is a ship’s identity the same after all its planks have been replaced, one at a time, over time?

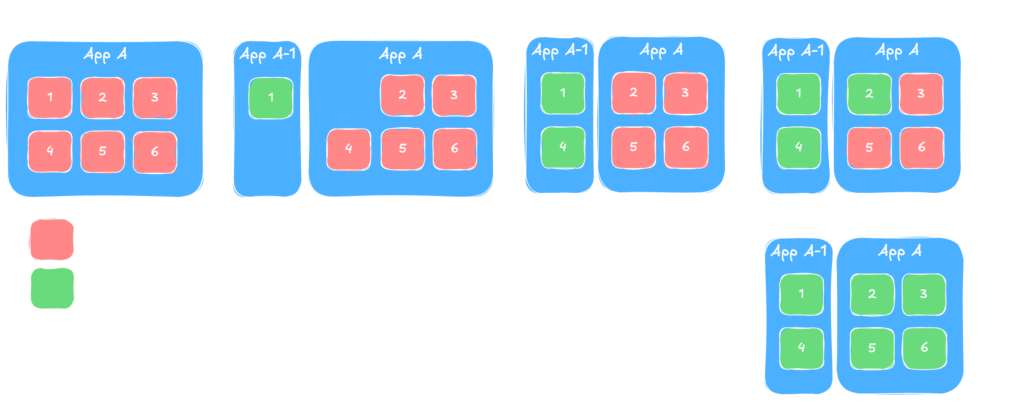

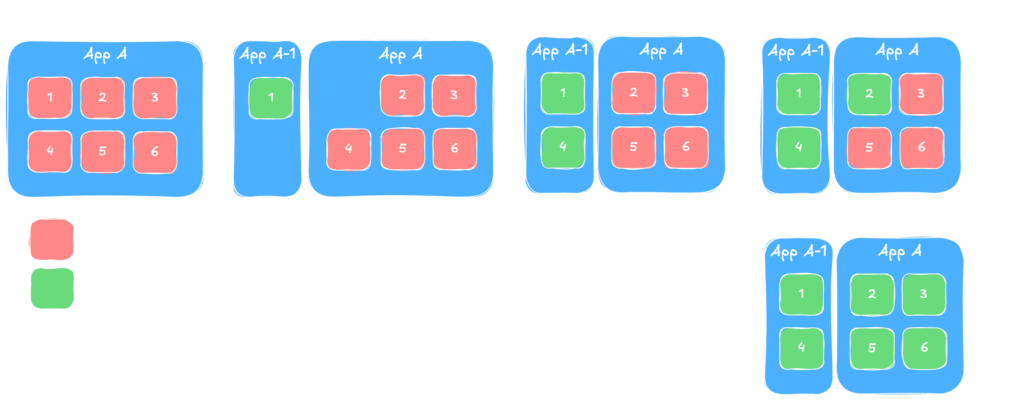

We will not answer this question in this paper, but software modernization is essentially the same concept. It is the process of iteratively refactoring an application, one component (or ‘plank’) at a time. This process is depicted in Figure 2. An application, “App A,” is made up of functional components 1 through 6. During modernization, the team would carefully extract each of these components, refactoring them to their new form. During this process, the team may decide to move one or more components into a separate application or service, depicted as “App A-1” on the figure. By the end of the process, you still have all the same functional components, but their makeup and interaction with each other has changed.

Figure 2: Modernization Process Example

The purpose of modernization is to optimize an application for cloud-native operations. This is where we achieve the outcomes promised by technologies like Kubernetes: zero-downtime deployments and highly resilient Software as a Service (SaaS). Modernization is considerably more intricate than replatforming. In replatforming, we can largely view the application as a black box, only examining its exterior to determine how to encapsulate it. Modernization requires the box to be completely opened and inspected. We need to understand the application’s constituent components to determine how they should be structured or how they should interact.

In some cases, like the one depicted in the figure above, this may necessitate the extraction of certain components into a separate service or application. Our teams utilize the 12-factor app methodology to determine how and which components should be refactored. This methodology is a set of principles that, when applied appropriately to software, result in software that has:

- resiliency to failure and attack, providing a persistent warfighter capability

- standardized interface design, allowing for rapid integrations with joint systems

- lower maintenance costs, making it possible to do the same or more in a budget constrained environment
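As one small illustration of what applying the methodology can look like in code, the 12-factor "Config" principle says that configuration should live in the environment rather than in the codebase. The sketch below is a minimal example under stated assumptions, not a description of any particular Clarity project: the variable names (`APP_DATABASE_URL`, `APP_PORT`, `APP_LOG_LEVEL`) and the `Settings` class are hypothetical.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    """Runtime configuration read from the environment (hypothetical names)."""
    database_url: str
    listen_port: int
    log_level: str


def load_settings(env=None) -> Settings:
    """Build Settings from environment variables, failing fast if one is missing."""
    env = os.environ if env is None else env
    # APP_DATABASE_URL is treated as required in this sketch; the others have defaults.
    missing = [name for name in ("APP_DATABASE_URL",) if name not in env]
    if missing:
        raise RuntimeError(f"missing required configuration: {', '.join(missing)}")
    return Settings(
        database_url=env["APP_DATABASE_URL"],
        listen_port=int(env.get("APP_PORT", "8080")),
        log_level=env.get("APP_LOG_LEVEL", "INFO"),
    )
```

Because nothing environment-specific is baked into the build, the same container image can be promoted from development to staging to production by changing only the environment it runs in.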

Modernization may seem like a substantial, multi-year undertaking where results are not visible until completion. In a properly run modernization, this is NOT the case; a refactor can take as little as a few weeks, with most taking only months to complete. Of course, some applications with older languages or frameworks may require significantly more work. No matter the complexity of the modernization, doing this process properly means that you will realize iterative improvements in production during the refactor, not a single influx of capabilities at the end of the effort. Leaders can choose transformation AND mission continuity.

Application Transformation at Clarity

Clarity is a mission-first software and data engineering contractor specializing in modern, cloud-first application transformation for the Department of War (DoW), IC, and Federal Agency communities. We utilize DevSecOps and agile methodologies to deliver resilient, secure software, with extensive experience migrating legacy applications to optimized cloud or secure on-premise environments. Our hundreds of engineers, many with prior experience from Pivotal Labs/AppTx, are experts in application transformation for various customers (DoW, Civilian, SLED, commercial).

A key differentiator for Clarity is our flexibility, avoiding the “opinionated platform approach” of competitors. We focus solely on the customer’s mission needs, building and deploying solutions across diverse platforms, including:

- Various Kubernetes distributions

- Virtual Machines

- Mobile environments

Core Principles

At Clarity, we have a set of core principles that we take into every transformation engagement. Although the approach and tools may change from application to application, these core principles are fundamental to how we execute.

Architecture First: Clarity has a robust solution architecture practice to guide our software developers toward solutions that are both resilient and adaptable while remaining aligned with mission outcomes. We aren’t just about developing agile stories and having developers write code to them. We understand that the best software is architected, and that architecture drives how the software is implemented. This results in a well-thought-out, resilient application.

Transform iteratively: At Clarity, we understand that transformation does not press “pause” on the mission. Because of this, we are strong practitioners of iterative refactoring, the practice of modifying code in place over time. By transforming iteratively, we reduce the time to deliver improvements to months, and the systems stay operational while the software is being improved.

Automate and Document: Application transformation serves as an opportune time to rectify deficiencies in automation and documentation. Clarity’s goal in any application transformation is to ensure continuous progress toward the ‘next thing’. Things that we automate are:

- Tests: Judiciously developing automated tests using modern frameworks with methodologies such as Test Driven Development (TDD), where the tests are written before functional code.

- API Documentation: The best documentation is tied to the code and does not need to be maintained separately. We understand the value of up-to-date API documentation, so we leverage OpenAPI libraries to generate synchronous and asynchronous API documentation that always stays in sync with the code.

- Building and deploying: In a healthy development cycle, we are building and deploying our applications to production-like environments multiple times a day for evaluation. Because of this cadence, we remove as many manual steps from this process as possible so the team stays productive.
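To make the test-first idea concrete, here is a minimal sketch using Python's standard `unittest` module. The function `normalize_callsign` and its rules are hypothetical and chosen only for illustration. In TDD the test class would be written (and seen to fail) before the function exists; both are shown together here so the example is self-contained.

```python
import unittest


# Step 1: the tests come first and describe the behavior we want.
class NormalizeCallsignTest(unittest.TestCase):
    def test_trims_and_uppercases(self):
        self.assertEqual(normalize_callsign("  viper 21 "), "VIPER 21")

    def test_collapses_internal_whitespace(self):
        self.assertEqual(normalize_callsign("viper   21"), "VIPER 21")

    def test_rejects_blank_input(self):
        with self.assertRaises(ValueError):
            normalize_callsign("   ")


# Step 2: the simplest implementation that makes those tests pass.
def normalize_callsign(raw: str) -> str:
    """Collapse whitespace and uppercase a callsign (hypothetical rules)."""
    cleaned = " ".join(raw.split()).upper()
    if not cleaned:
        raise ValueError("callsign must not be blank")
    return cleaned
```

Saved to a file, these tests can be run with `python -m unittest`, which is the kind of command a pipeline can execute on every commit.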

The Process at Clarity

When Clarity engages in application transformation we generally follow a standard outline. We understand that every organization is different, so we will tailor our approach and which of our processes and practices we employ for your unique organization. Figure 3 shows how the phases and milestones work on a typical application transformation. Here are some examples of the types of tools or practices we use during the different phases of transformation.

Figure 3: Typical Phased Process

Discovery

During the discovery phase our intent is to understand the organization, its software, and its mission. The focus is not on understanding the code and its function in isolation, but on understanding how that code manifests in mission outcomes, and which of those outcomes are most important.

Value Stream Mapping: The process of tracing how business processes flow through your legacy application and service portfolio, revealing which services and systems are most central to delivering your business objectives. By mapping value origination and delivery processes end-to-end, you expose bottlenecks, dependencies, and redundancies in your architecture. Instead of modernizing everything all at once, we can target the applications and integrations that will provide the largest and most immediate return on your investment first, and surgically work our way to the rest of the system as needed.

Defining Measurable Objectives: Transformation efforts need clear objectives, measurable metrics, and concrete targets. We work with you to identify what success looks like for your organization and establish the framework for tracking progress. These vary widely depending on what matters most to you at the time. Some organizations prioritize improving system resilience or performance, while others focus on accelerating delivery or increasing user satisfaction. Examples include:

- Objective: Reduce time to production | Metric: Cycle time | Target: Weekly deployments

- Objective: Improve system reliability | Metric: System uptime | Target: 99.99% uptime over 6 months

- Objective: Increase customer satisfaction | Metric: Net Promoter Score | Target: Average NPS ≥ 9 after 6 months

When these objectives connect to measurable business impact—faster feature delivery enabling revenue growth, improved uptime reducing support costs, higher satisfaction driving retention—you can track and communicate the return on your modernization investment throughout the effort, not just at the end.
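A target such as "99.99% uptime over 6 months" becomes easier to reason about once it is converted into an allowed-downtime budget. The helper below is a rough sketch of that arithmetic; the function names are hypothetical, and approximating six months as 182 days is an assumption made for the example.

```python
def downtime_budget_minutes(uptime_target: float, period_days: float) -> float:
    """Minutes of downtime allowed over the period while still meeting the target."""
    if not 0.0 <= uptime_target <= 1.0:
        raise ValueError("uptime_target must be a fraction between 0 and 1")
    total_minutes = period_days * 24 * 60
    return total_minutes * (1.0 - uptime_target)


def meets_target(observed_downtime_minutes: float,
                 uptime_target: float,
                 period_days: float) -> bool:
    """True when the observed downtime fits inside the budget."""
    return observed_downtime_minutes <= downtime_budget_minutes(uptime_target, period_days)


if __name__ == "__main__":
    # Assumption: six months is approximated as 182 days.
    budget = downtime_budget_minutes(0.9999, 182)
    print(f"Allowed downtime: {budget:.1f} minutes")
```

Under that assumption the budget works out to roughly 26 minutes for the entire six-month window, which shows why a target at this level generally cannot be met with manual deployments and recovery.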

Technical Deep Dive: These generally start with a fairly extensive set of questions to understand the technical stack and any technical shortfalls or constraints, as well as to build a deep understanding of the current and target platforms. After that, our discovery engineers will dig into the code, map it out, generate current-state architectural diagrams, and get a sense of general complexity as well as internal and external interfaces, as these are generally the areas that cause the most complexity during transformation.

Team Development

Building a transformation team is one of the most important phases of the transformation. Getting this step right is the difference between a fast and perfectly executed transformation, and one that drags on far longer than the customer would like. Here at Clarity we take this step very seriously, and ensure that the composition of the team is the right combination of product, design, software, cybersecurity, platform, and data engineering.

We look at our team to identify individuals with domain context and tech stack experience for your application. All of our application transformation team members are highly qualified consultants that are proven to work effectively within client organizations and existing engineering teams. Many of our team members have worked throughout the DoW and IC community and may have previous experience in your domain. We leverage this expertise wherever possible.

Some important qualities in our software transformation staff are:

- Highly experienced technically with a wide variety of technical stacks, languages, and platforms.

- Talented communicators with exceptional written and verbal communication skills, able to navigate complex politics and interpersonal environments.

- Able to rapidly learn and switch between multiple new mission contexts.

- Highly effective at navigating codebases they did not write, resisting the common temptation among software engineers to “just rewrite it myself.”

- Well versed in architectural patterns, enabling them to employ proper architecture at every level, as well as the ability to communicate reasoning behind selected patterns.

Replatforming

During replatforming we work to move the application to the target cloud platform, but more importantly we set up infrastructure around how we will develop and test to ensure that future iterations are fast.

Test Automation: Constantly measuring our progress to ensure there are no regressions in performance or capability while also ensuring that we are hitting the KPIs we put together is paramount. In a world of continuous delivery, we need to ensure that the software that is being pushed to production is highly vetted.

Being agile isn’t about pushing changes to production and hoping for the best; it’s about building a system that pushes validated software efficiently. Test automation is central to that system. We will develop and augment tests for the application, ensuring that we can run them in an automated fashion throughout the transformation process. We do so using modern functional and non-functional testing frameworks to verify both that the user functionality meets objectives and that the performance, scale, and resilience of the application meet the mission need.

We leverage modern libraries and applications to develop comprehensive testing suites at all levels of the software application including:

- Unit testing

- Integration testing

- End-to-end testing

- Performance testing

- Chaos testing

- Security testing

Pipeline Development: During an application transformation engagement we will develop or augment a Continuous Integration/Continuous Deployment (CI/CD) pipeline to manage application release automation. This will include, but is not limited to, the building, testing, scanning, and dependency updating associated with your application. Some of the stages we like to include in the pipelines we develop are:

- Build: build of the application binaries or deployment files

- Test: testing of all levels, from unit to multiple system integration and end-to-end tests

- Source code scanning: Static code analysis with scanners such as Fortify, so developers are consistently notified of findings in their software

- Binary scanning: Container or similar binary scanning for any additional vulnerabilities

- SBOM generation: Often required for an authority to operate, we will automatically generate the list of software and libraries needed for your applications.

- Required dependency updates: Using tooling such as Renovate, we are able to automatically check for needed dependency updates and even apply them to the baseline.

- Deployment to environments: Deploying your applications to different environments including development, staging, and even production when possible is crucial to improving cycle time. Taking time to automate these usually manual steps will save weeks of time per year.

Developing this kind of pipeline ensures that deployed applications are more stable and more secure, and that they are deployed as rapidly as possible with as few humans in the loop as possible.
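The core behavior of such a pipeline, running stages in order and stopping at the first failure so that nothing unvetted reaches an environment, can be sketched in a few lines. This is a conceptual model only, not how any specific CI/CD product is configured; the `Stage` class, `run_pipeline` function, and the placeholder stage callables are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stage:
    name: str
    run: Callable[[], bool]  # returns True when the stage succeeds


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure.

    Returns the names of the stages that passed.
    """
    passed: List[str] = []
    for stage in stages:
        if not stage.run():
            print(f"{stage.name}: FAILED, stopping pipeline")
            break
        print(f"{stage.name}: ok")
        passed.append(stage.name)
    return passed


if __name__ == "__main__":
    # Placeholder callables stand in for real build, test, and scan tooling.
    run_pipeline([
        Stage("build", lambda: True),
        Stage("test", lambda: True),
        Stage("source-scan", lambda: True),
        Stage("binary-scan", lambda: True),
        Stage("sbom", lambda: True),
        Stage("deploy-staging", lambda: True),
    ])
```

Placing the deployment stages last means a failed test or scan halts the run before anything is released.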

Modernization

This is where we will spend most of our time, as it’s where we have the most opportunity to truly transform how the applications perform.

Domain Driven Design: At Clarity we believe that one of the most effective ways to define a software architecture is around mission domains, and Domain Driven Design (DDD) is a practice that can be used to map out that kind of architecture. DDD is a software development approach that focuses on understanding the core mission domain to create models that accurately represent its complexity and logic. It emphasizes close collaboration between developers and domain experts to build a common “ubiquitous language” that is used consistently in both conversations and code, bridging the gap between business requirements and technical implementation. Practicing DDD also allows for rapid learning on complex business or mission domains as well as building efficient teams that are experts in those domains. It lowers the communication barriers between developers and mission experts so they reach a common understanding more quickly, and it expedites implementation of new features. It also ensures that systems remain modular enough to withstand increased mission complexity over time.

Tooling and library development: Clarity engineers favor software development practices such as DRY (Don’t Repeat Yourself). We have found that older software applications, especially those in the DoW, have a lot of unnecessary repetition. As we analyze and modernize your codebases, we will look for areas to DRY them up, making them easier to understand, smaller, easier to deploy, and less costly to maintain. To do this we will develop separate applications or libraries to separate your codebases into smaller, composable components. This means that across a large system we can dramatically reduce the maintenance cost of an application by ensuring logic is only written and maintained in one place. Additionally, we will look for opportunities to develop custom tooling. This often comes in the form of custom test tooling or service mocks. We develop these to reduce the dependencies on outside systems during development and lower-level testing, ensuring that the development team can continue to move quickly.
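A service mock of the kind described above can be as simple as an in-memory class that satisfies the same interface as the real external system. The sketch below is illustrative only: `PositionService`, `FakePositionService`, and `describe_asset` are hypothetical names, and a real mock would mirror whatever interface the actual dependency exposes.

```python
from typing import Dict, List, Protocol, Tuple


class PositionService(Protocol):
    """Interface the application expects from the external system (hypothetical)."""

    def latest_position(self, asset_id: str) -> Tuple[float, float]: ...


class FakePositionService:
    """In-memory stand-in used during development and lower-level tests."""

    def __init__(self, positions: Dict[str, Tuple[float, float]]):
        self._positions = dict(positions)
        self.calls: List[str] = []  # records lookups so tests can assert on them

    def latest_position(self, asset_id: str) -> Tuple[float, float]:
        self.calls.append(asset_id)
        if asset_id not in self._positions:
            raise KeyError(f"unknown asset: {asset_id}")
        return self._positions[asset_id]


def describe_asset(service: PositionService, asset_id: str) -> str:
    """Application logic that depends only on the interface, not the real system."""
    lat, lon = service.latest_position(asset_id)
    return f"{asset_id} at {lat:.2f}, {lon:.2f}"
```

Because `describe_asset` depends only on the interface, the team can exercise it without network access to the real system and swap in the production client at deployment time.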

Transforming your applications with Clarity

In an era where technological superiority is paramount, the modernization of critical government software is not merely an upgrade; it is a strategic imperative. Clarity stands ready to partner with government customers, bringing unparalleled expertise and a proven, iterative approach to transform legacy applications into resilient, secure, and high-performing systems. We empower your warfighters with the cutting-edge capabilities needed to maintain a decisive advantage against any adversary. Engage with Clarity today to begin a transformation journey that secures tomorrow’s mission success.

To learn more or start the conversation, reach out to sysdata@clarityinnovates.com

Gregory Ball

Gregory Ball is a Director of Solutions Architecture at Clarity with over a decade of experience centered on the large-scale transformation of legacy systems into cloud-native applications. He specializes in the architectural modernization of complex mission systems across the Department of War, specifically by migrating aging infrastructures into scalable, cloud-ready environments. Throughout his career leading programs of more than 100 personnel, he has successfully steered the technical strategy required to move outdated software into the modern era for the Air Force, Navy, and Army.